Imagine you signed a 24-month enterprise AI agreement on Monday, locked to one model lab for all your AI implementation needs.

Then yesterday happened. GPT-5.5 launched, claiming to beat Opus 4.7 on reasoning and leading Terminal-Bench 2.0. Today, DeepSeek V4 Pro launched at roughly one-eighth to one-tenth the price of either once you factor in cached inputs, within a few benchmark points on SWE-bench Pro and ahead on coding benchmarks like LiveCodeBench and Codeforces.

Workflows built inside a model provider's application are locked to that provider's roadmap and pricing, not to the priorities of your business.

What two frontier model launches in 48 hours revealed

GPT-5.5 leads Terminal-Bench 2.0 at 82.7 versus V4 Pro's 67.9 and claims the top reasoning score over Opus 4.7. V4 Pro Max leads LiveCodeBench and Codeforces. Opus 4.7 still tops SWE-bench Pro at 64.3. V4 Pro output runs about $3.48 per million tokens against Opus 4.7 at $25 and GPT-5.5 at $30. On output alone that is roughly one-seventh of Opus 4.7's price and one-ninth of GPT-5.5's, and with cached input the gap widens to roughly one-eighth and one-tenth.
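To make the output-price gap concrete, here is a minimal sketch that computes the multiples from the three per-million-token output prices quoted above. It covers output tokens only; cached-input prices are not listed here, so the one-eighth and one-tenth figures are not reproduced. The function and variable names are illustrative.

```python
# Output prices in USD per million tokens, as quoted in the paragraph above.
OUTPUT_PRICE_PER_MTOK = {
    "DeepSeek V4 Pro": 3.48,
    "Opus 4.7": 25.00,
    "GPT-5.5": 30.00,
}


def price_multiple(model: str, baseline: str = "DeepSeek V4 Pro") -> float:
    """Return how many times more `model` output costs than `baseline` output."""
    return OUTPUT_PRICE_PER_MTOK[model] / OUTPUT_PRICE_PER_MTOK[baseline]


for name in ("Opus 4.7", "GPT-5.5"):
    print(f"{name}: {price_multiple(name):.1f}x the output price of DeepSeek V4 Pro")
```

On these numbers, Opus 4.7 output costs about 7.2 times as much as V4 Pro output and GPT-5.5 output about 8.6 times as much.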

How Cursor taught engineers to stop betting on one AI lab

Engineering teams already solved this. They stopped betting on a lab and started betting on the editor that sits between all the labs.

Cursor is that editor. Every frontier model is a dropdown. Switching cost per task is zero. When a new model ships, the engineer tries it the day it lands. If it's better, they move. If it's worse, they don't. Cursor is now used by 70% of the Fortune 1000. The labs compete for time inside it, which keeps every one of them honest on price and quality.

Workflow automation needs the same layer. Not a different flowchart tool. A different relationship between the workflow definition and the model that runs it.

The rewrite tax teams pay when locked to one AI provider

If your automations call one lab's API directly, or sit on an orchestration tool that hardcodes a single provider, you cannot capture the gains from either of this week's releases without a rewrite. A recent Zapier survey found 58% of enterprise AI vendor migrations either failed or took far more effort than expected, and nearly three-quarters of enterprise leaders said losing their current AI vendor would disrupt day-to-day operations.

So most teams skip the migration. They keep running last year's model choice on this year's workload. The tax is worst on the workflows you run most. A 10,000-row enrichment job that ran fine on Opus last quarter now costs seven to ten times what it needs to, for output a cheaper model could also produce. A daily pipeline digest that genuinely benefits from Opus's reasoning is still worth the spend. The problem is that a single-lab contract gives you no way to treat those two workflows differently.

What model-portable workflows look like in practice

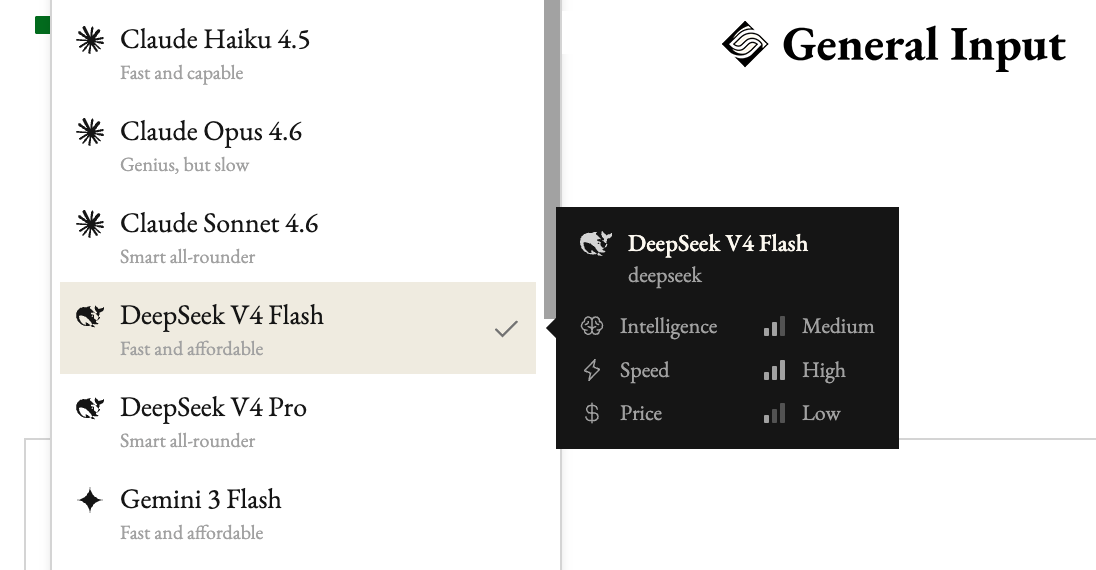

On General Input, every workflow is model-portable by default. You describe the workflow in plain English. At run time you pick the model: per template, per run, or per tier inside a single run. Credentials for each lab are stored once, encrypted at rest, injected at call time, and stripped before any output reaches the model that's generating text. The workflow file is the same whether it calls Opus 4.7, GPT-5.5, or DeepSeek V4 Pro.
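The separation described above, a workflow definition that never names a provider plus a model chosen at run time, can be sketched in a few lines. This is not General Input's actual API: `Workflow`, `MODEL_REGISTRY`, `run_workflow`, and the model ID strings are hypothetical, and the "models" are stubs that echo their input rather than real provider clients.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# A model is treated as a plain function from prompt to text. Real provider
# clients would be wrapped to fit this shape; these are echo stubs only.
ModelFn = Callable[[str], str]


@dataclass(frozen=True)
class Workflow:
    """A workflow definition. Note that it contains no provider or model name."""
    name: str
    instructions: str


def make_stub_model(label: str) -> ModelFn:
    def call(prompt: str) -> str:
        return f"[{label}] handled: {prompt}"
    return call


# Hypothetical registry; the keys are illustrative labels, not real model IDs.
MODEL_REGISTRY: Dict[str, ModelFn] = {
    "opus-4.7": make_stub_model("opus-4.7"),
    "gpt-5.5": make_stub_model("gpt-5.5"),
    "deepseek-v4-pro": make_stub_model("deepseek-v4-pro"),
}


def run_workflow(workflow: Workflow, item: str, model: str) -> str:
    """Run the same workflow definition against whichever model is chosen now."""
    if model not in MODEL_REGISTRY:
        raise ValueError(f"unknown model: {model}")
    prompt = f"{workflow.instructions}\nInput: {item}"
    return MODEL_REGISTRY[model](prompt)


enrich = Workflow(
    name="enrich-leads",
    instructions="Add industry and company size to this lead.",
)
for model in ("deepseek-v4-pro", "opus-4.7"):
    print(run_workflow(enrich, "Acme Corp", model))
```

In this sketch, supporting a newly released model means adding one entry to the registry; the `enrich` definition does not change.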

That means the 10,000-row enrichment job can run on DeepSeek V4 Pro for a few dollars instead of forty. The daily pipeline digest can stay on Opus because the reasoning matters and it runs once. The ambiguous-ticket escalator can classify cheaply and route the hard cases to whichever model you trust most this week. When the next frontier model drops next month, you add it to the list and keep going.
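The ticket escalator above follows a common pattern: classify with a cheap model first, and send only the low-confidence cases to a stronger one. Here is a minimal sketch of that routing logic. The two classifiers are keyword stubs standing in for model calls, and the 0.75 confidence threshold is an arbitrary illustrative value, not a recommendation.

```python
from typing import Callable, Tuple

# A classifier returns (label, confidence between 0 and 1).
Classifier = Callable[[str], Tuple[str, float]]


def cheap_classifier(ticket: str) -> Tuple[str, float]:
    """Stub for a low-cost model: confident only on obvious keywords."""
    text = ticket.lower()
    if "refund" in text:
        return ("billing", 0.95)
    if "password" in text:
        return ("account", 0.90)
    return ("unknown", 0.30)


def strong_classifier(ticket: str) -> Tuple[str, float]:
    """Stub for a more expensive model, used only for the hard cases."""
    return ("needs-human-review", 0.80)


def route(
    ticket: str,
    threshold: float = 0.75,  # hypothetical cut-off for accepting the cheap answer
    cheap: Classifier = cheap_classifier,
    strong: Classifier = strong_classifier,
) -> Tuple[str, str]:
    """Return (label, tier), where tier records which model produced the label."""
    label, confidence = cheap(ticket)
    if confidence >= threshold:
        return (label, "cheap")
    label, _ = strong(ticket)
    return (label, "strong")


print(route("I want a refund for last month"))
print(route("Something is off but I can't describe it"))
```

Because both tiers are passed in as parameters, swapping the model behind either tier does not touch the routing logic.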

The labs will keep leapfrogging

They always do. The release cycle is shorter than the useful life of any recurring workflow, and that will stay true. Spend your energy finding the right workflow for your business. The model underneath will keep getting faster and cheaper without you.

DeepSeek V4 and V4 Pro are live in General Input today: pick either from the model dropdown on any workflow.